Andrej Karpathy

I like to train deep neural nets on large datasets 🧠🤖💥

history

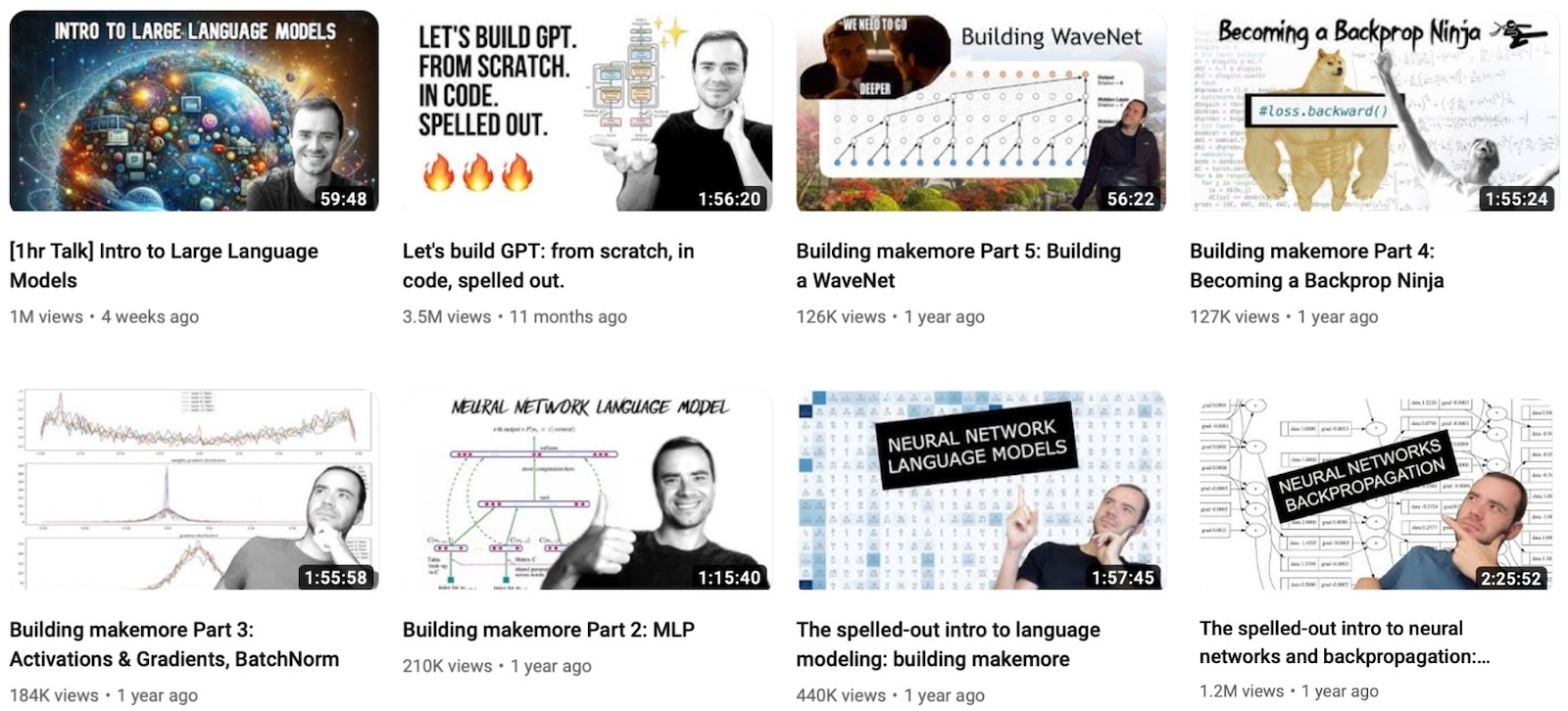

I am founder at Eureka Labs. I recently elaborated on its vision on the Dwarkesh podcast. While work on Eureka continues, I create educational videos on AI on my YouTube channel. There are two tracks.

General audience track:

- Deep Dive into LLMs like ChatGPT — on under-the-hood fundamentals of LLMs.

- How I use LLMs — a more practical guide to examples of use in my own life.

- Intro to Large Language Models — a third, parallel, video from a longer time ago.

Technical track: Follow the Zero to Hero playlist.

For all the latest, I spend most of my time on 𝕏/Twitter or GitHub.

I came back to OpenAI where I built a new team working on midtraining and synthetic data generation.

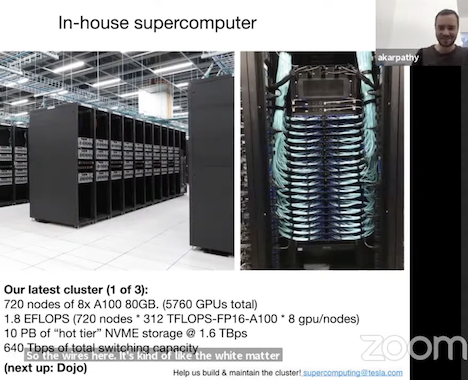

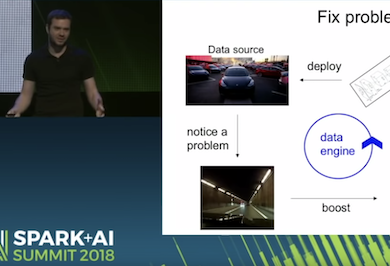

I was the Director of AI at Tesla, where I led the computer vision team of Tesla Autopilot and (very briefly) Tesla Optimus. My team handled all in-house data labeling, neural network training and deployment on Tesla's custom inference chip. Today, the Autopilot increases the safety and convenience of driving, but the team's goal is to make Full Self-Driving a reality at scale. See Aug 2021 Tesla AI Day for more.

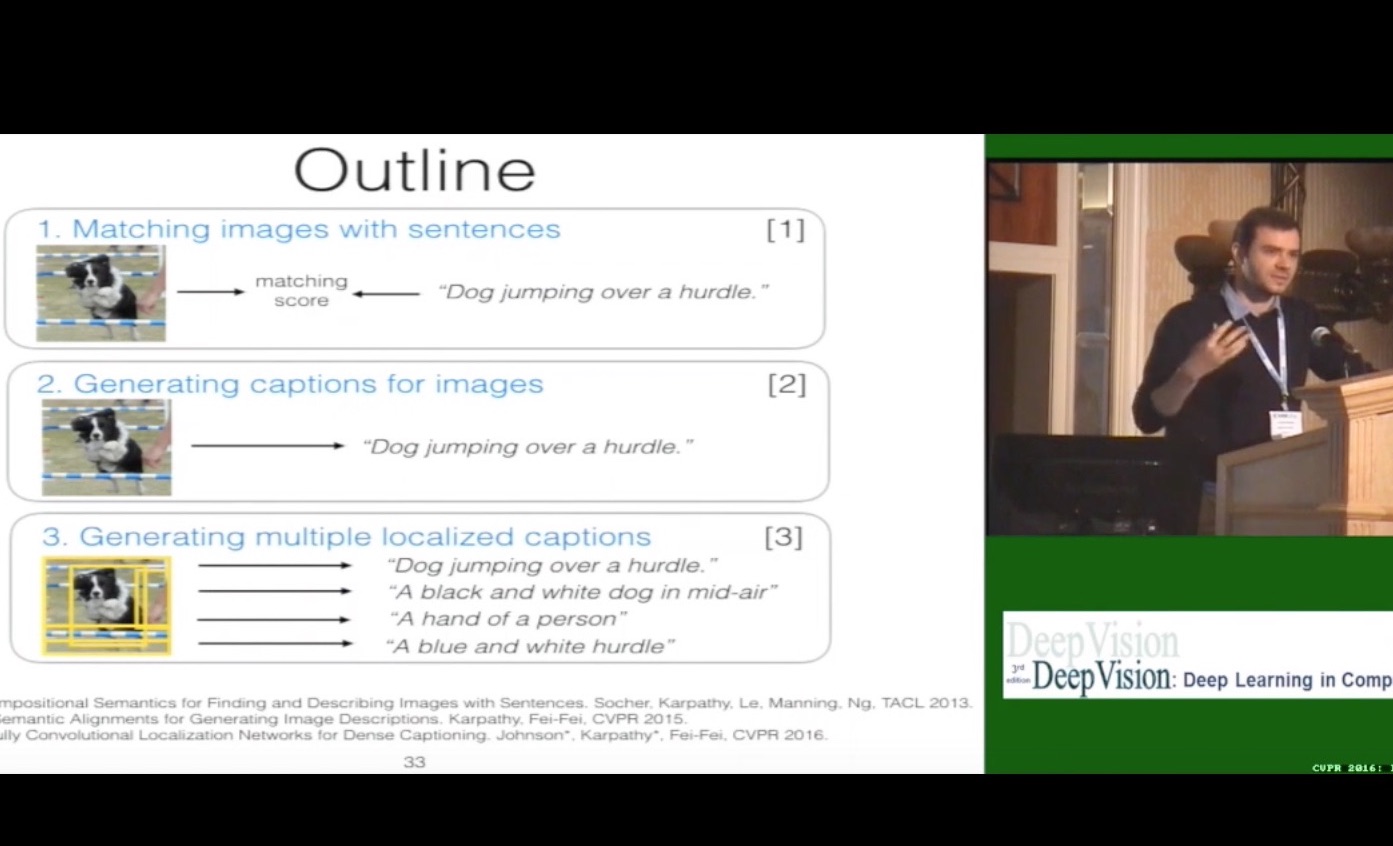

My PhD was focused on convolutional/recurrent neural networks and their applications in computer vision, natural language processing and their intersection. My adviser was Fei-Fei Li at the Stanford Vision Lab and I also had the pleasure to work with Daphne Koller, Andrew Ng, Sebastian Thrun and Vladlen Koltun along the way during the first year rotation program.

I designed and was the primary instructor for the first deep learning class at Stanford — CS 231n: Convolutional Neural Networks for Visual Recognition. The class became one of the largest at Stanford and has grown from 150 enrolled in 2015 to 330 students in 2016, and 750 students in 2017.

Along the way I squeezed in 3 internships at (baby) Google Brain in 2011 working on learning-scale unsupervised learning from videos, then again in Google Research in 2013 working on large-scale supervised learning on YouTube videos, and finally at DeepMind in 2015 working on the deep reinforcement learning team with Koray Kavukcuoglu and Vlad Mnih.

MSc at the University of British Columbia where I worked with Michiel van de Panne on learning controllers for physically-simulated figures (i.e., machine-learning for agile robotics but in a physical simulation).

BSc at the University of Toronto with a double major in computer science and physics and a minor in math. This is where I first got into deep learning, attending Geoff Hinton's class and reading groups.

bio

Andrej Karpathy is an AI researcher and founder of Eureka Labs, focused on modernizing education in the age of AI. He previously served as the Director of AI at Tesla and was a founding member of OpenAI. During his PhD at Stanford, he was the architect and lead instructor of the first deep learning course at Stanford (CS231n), which has become one of its most popular classes.

featured talks

teaching

I have a YouTube channel, where I post lectures on LLMs and AI more generally.

In 2015 I designed and was the primary instructor for the first deep learning class at Stanford — CS 231n: Convolutional Neural Networks for Visual Recognition ❤️. The class became one of the largest at Stanford and has grown from 150 enrolled in 2015 to 330 students in 2016, and 750 students in 2017.

featured writing

I have three blogs 🤦♂️. This GitHub blog is my oldest one. I then briefly and sadly switched to my second blog on Medium. I now have a Bear blog. Here is the collection of posts across all three:

- microgpt github

- 2025 LLM Year in Review bear

- Chemical hygiene bear

- Auto-grading decade-old Hacker News discussions with hindsight bear

- The space of minds bear

- Verifiability bear

- Animals vs Ghosts bear

- Vibe coding MenuGen bear

- Power to the people: How LLMs flip the script on technology diffusion bear

- Finding the Best Sleep Tracker bear

- The append-and-review note bear

- Digital hygiene bear

- I love calculator bear

- Deep Neural Nets: 33 years ago and 33 years from now github

- A from-scratch tour of Bitcoin in Python github

- Short Story on AI: Forward Pass github

- Biohacking Lite github

- A Recipe for Training Neural Networks github

- Software 2.0 medium

- AlphaGo, in context medium

- ICML accepted papers institution stats medium

- A Peek at Trends in Machine Learning medium

- ICLR 2017 vs arxiv-sanity medium

- Virtual Reality: still not quite there, again. medium

- Yes you should understand backprop medium

- A Survival Guide to a PhD github

- Short Story on AI: A Cognitive Discontinuity github

- CS183c Assignment #3 medium

- The Unreasonable Effectiveness of Recurrent Neural Networks github

- What I learned from competing against a ConvNet on ImageNet github

- The state of Computer Vision and AI: we are really, really far away github

pet projects

This list is a bit outdated, see my up to date projects on my GitHub.

./micrograd

micrograd is a tiny scalar-valued autograd engine (with a bite! :)). It implements backpropagation (reverse-mode autodiff) over a dynamically built DAG and a small neural networks library on top of it with a PyTorch-like API.

./char-rnn

char-rnn was a Torch character-level language model built out of LSTMs/GRUs/RNNs. Related to this also see the Unreasonable Effectiveness of Recurrent Neural Networks blog post, or the minimal RNN gist.

./arxiv-sanity

arxiv-sanity tames the overwhelming flood of papers on Arxiv. It allows researchers to discover relevant papers, search/sort by similarity, see recent/popular papers, and get recommendations. Deployed live at arxiv-sanity.com. My obsession with meta research involved many more projects over the years, e.g. see pretty NIPS 2020 papers, research lei, scholaroctopus, and biomed-sanity. My most recent arxiv-sanity-lite from-scratch rewrite is much better.

./neuraltalk2

neuraltalk2 was an early image captioning project in (lua)Torch. Also see our later extension with Justin Johnson to dense captioning.

./imagenet-ref

I am sometimes jokingly referred to as the reference human for ImageNet because I competed against an early ConvNet on categorizing images into 1,000 classes. This required a bunch of custom tooling and a lot of learning about dog breeds. See the blog post "What I learned from competing against a ConvNet on ImageNet". Also a Wired article.

./convnetjs

ConvNetJS is a deep learning library written from scratch entirely in Javascript. This enables nice web-based demos that train convolutional neural networks (or ordinary ones) entirely in the browser. Many web demos included. I did an interview with Data Science Weekly about the library and some of its back story here. Also see my later followups such as tSNEJS, REINFORCEjs, or recurrentjs, GANs in JS.

./ulogme

How productive were you today? How much code have you written? Where did your time go? For a while I was really into tracking my productivity, and since I didn't like that RescueTime uploads your (very private) computer usage statistics to a cloud I wrote my own, privacy-first, tracker — ulogme! That was fun.

./misc

I built a lot of other random stuff over time. Rubik's cube color extractor, predator prey neuroevolutionary multiagent simulations, more of those, sketcher bots, games for computer game competitions #1, #2, #3, random computer graphics things, Tetris AI, multiplayer coop tetris, etc.

publications

Also on Google Scholar

misc unsorted

- Neural Networks: Zero To Hero lecture series

- My first blog, my second blog and my current blog.

- I like sci-fi. I enumerated and sorted sci-fi books I've read here.

- Justin Johnson and I held a reading group on Clubhouse. See YouTube or as podcast.

- Loss function Tumblr :D! My collection of funny loss functions.

- Some advice for undergrads and advice for those considering or pursuing a PhD.

- New York Times article covering my PhD image captioning work.

- t-SNE visualization of CNN codes for ImageNet, pretty!

- A long time ago I was really into Rubik's Cubes. I learned to solve them in about 17 seconds and then, frustrated by lack of learning resources, created YouTube videos explaining the Speedcubing methods. There's also my long dead cubing page. And a video of me at a Rubik's cube competition :)

- 0 frameworks were used to make this simple responsive website because I am becoming seriously allergic to 500-pound websites. This one is pure HTML and CSS in two static files and that's it.